WebGL performance

Last week, after we added the tool for quickly placing many objects in a space, we noticed that the renderer started struggling to keep the frame rate (FPS) at an acceptable level.

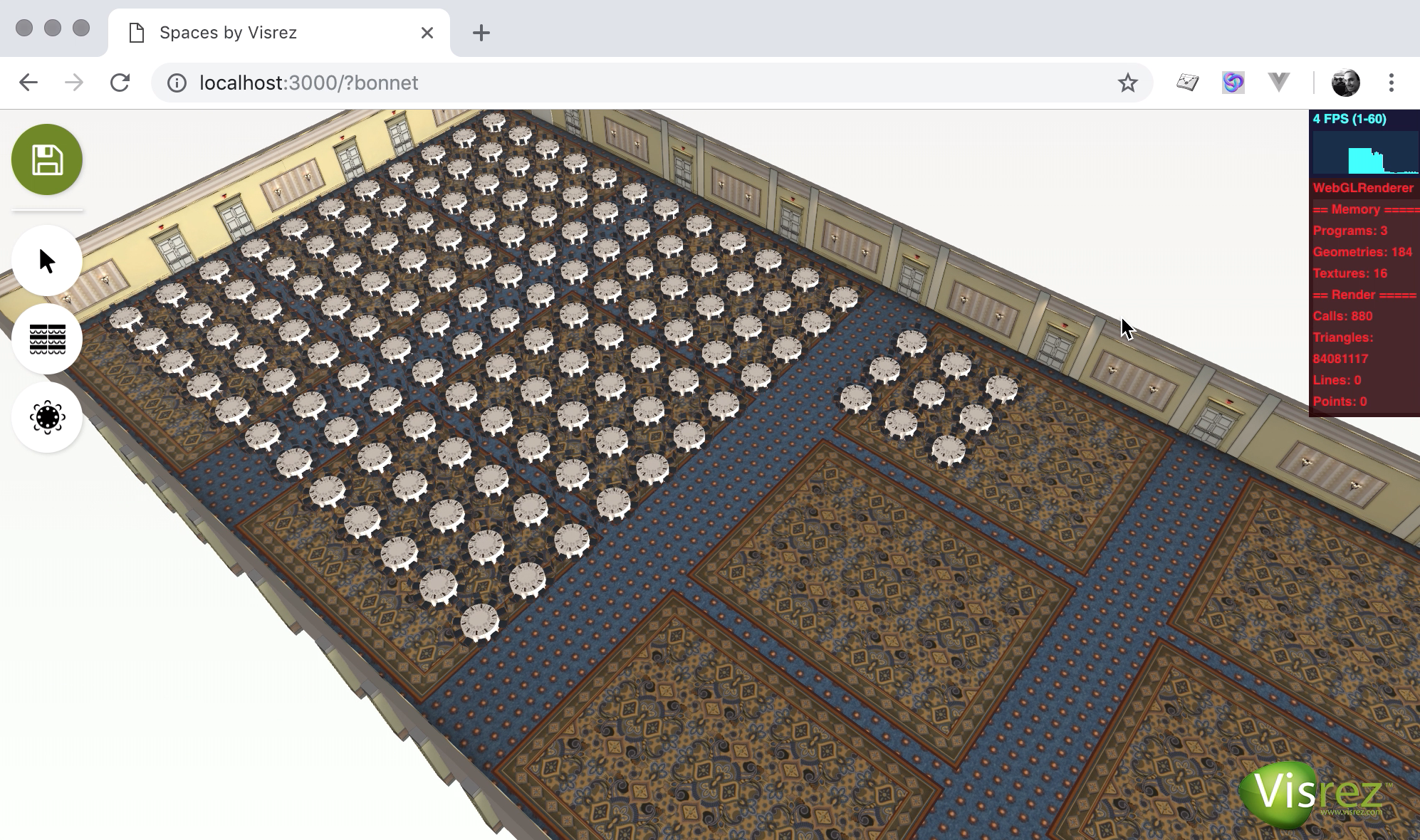

As the example above shows, and as you might expect, the complexity of the object models starts to matter once you add many of them. The frame rate drops to 4 FPS with more than 84M polygons on screen, and at that point the editor is no longer interactive.
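To put those numbers in perspective, here is the frame-time arithmetic. It assumes a 60 FPS target and, as a rough simplification, that rendering cost scales linearly with the number of polygons drawn:

$$
t_{\text{frame}} = \frac{1}{4\ \text{FPS}} = 250\ \text{ms}, \qquad
t_{\text{budget}} = \frac{1}{60\ \text{FPS}} \approx 16.7\ \text{ms}, \qquad
\frac{250\ \text{ms}}{(1000/60)\ \text{ms}} = 15
$$

$$
84 \times 10^{6}\ \tfrac{\text{polygons}}{\text{frame}} \times 4\ \tfrac{\text{frames}}{\text{s}} \approx 3.36 \times 10^{8}\ \tfrac{\text{polygons}}{\text{s}}
\;\Rightarrow\;
\frac{3.36 \times 10^{8}}{60} \approx 5.6 \times 10^{6}\ \tfrac{\text{polygons}}{\text{frame}} \text{ at 60 FPS}
$$

Under that simplification, staying interactive would mean drawing roughly fifteen times fewer polygons per frame.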

However, our scenes have a property we should be able to exploit: a lot of duplication. Instead of loading every banquet object into memory separately, we should be able to load the model once and store only the attributes that vary per instance (i.e. position and rotation). This common technique is called object instancing.
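A minimal sketch of the idea, assuming each instance only needs a position and a rotation around the vertical axis (yaw): the model's geometry is stored once, and each instance contributes just one 4×4 transform matrix to a shared buffer. `InstanceTransform` and `packInstanceMatrices` are hypothetical names for illustration, not code from our branches. The buffer uses the column-major, 16-floats-per-instance layout that Three.js uses for `InstancedMesh.instanceMatrix`.

```typescript
// Hypothetical per-instance data for one repeated model (e.g. a banquet object).
interface InstanceTransform {
  x: number;
  y: number;
  z: number;
  yaw: number; // rotation around the vertical (Y) axis, in radians
}

// Packs all instance transforms into a single Float32Array of 4x4 matrices,
// 16 floats per instance, stored column by column (column-major).
// Each matrix is a translation combined with a rotation around Y (unit scale).
function packInstanceMatrices(instances: InstanceTransform[]): Float32Array {
  const out = new Float32Array(instances.length * 16);
  instances.forEach((t, i) => {
    const c = Math.cos(t.yaw);
    const s = Math.sin(t.yaw);
    const o = i * 16; // start of this instance's matrix
    out.set([c, 0, -s, 0], o);           // column 0
    out.set([0, 1, 0, 0], o + 4);        // column 1
    out.set([s, 0, c, 0], o + 8);        // column 2
    out.set([t.x, t.y, t.z, 1], o + 12); // column 3: translation
  });
  return out;
}
```

In Three.js one would normally fill this buffer through `InstancedMesh.setMatrixAt(index, matrix)` and then set `instanceMatrix.needsUpdate = true`. The payoff is that all instances are drawn in one draw call and share one copy of the geometry; for 150,000 instances the matrix buffer alone is 150,000 × 64 bytes = 9.6 MB. Note that instancing reduces draw calls and memory use, not the number of triangles the GPU has to process each frame.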

Three.js ships examples of object instancing; on my laptop, one of them rendered 150,000 cubes at 50 FPS.

So I started building an experiment with object instancing for our Spaces Editor. Instancing required each of our objects to become a single mesh while preserving its textures, so a good amount of code had to be updated.
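Because a Three.js `InstancedMesh` is built from a single geometry, a model made of several parts has to be merged first. The sketch below shows only the core of that step under simplified assumptions: each part has xyz positions and a triangle index buffer, while normals, UVs and materials (and therefore the textures we had to preserve) are ignored. `SimpleGeometry` and `mergeParts` are hypothetical names.

```typescript
// Hypothetical minimal geometry: xyz positions plus triangle indices.
interface SimpleGeometry {
  positions: Float32Array; // 3 floats per vertex
  indices: Uint32Array;    // 3 indices per triangle
}

// Concatenates several indexed geometries into one. Each part's indices are
// shifted by the number of vertices written before it, so they keep pointing
// at that part's own vertices inside the merged buffer.
function mergeParts(parts: SimpleGeometry[]): SimpleGeometry {
  const totalFloats = parts.reduce((n, p) => n + p.positions.length, 0);
  const totalIndices = parts.reduce((n, p) => n + p.indices.length, 0);
  const positions = new Float32Array(totalFloats);
  const indices = new Uint32Array(totalIndices);

  let floatOffset = 0;
  let indexOffset = 0;
  let vertexOffset = 0;
  for (const part of parts) {
    positions.set(part.positions, floatOffset);
    for (let i = 0; i < part.indices.length; i++) {
      indices[indexOffset + i] = part.indices[i] + vertexOffset;
    }
    floatOffset += part.positions.length;
    indexOffset += part.indices.length;
    vertexOffset += part.positions.length / 3;
  }
  return { positions, indices };
}
```

In practice, the `BufferGeometryUtils` addon that ships with Three.js provides `mergeGeometries` (named `mergeBufferGeometries` in older releases), which also carries over the other vertex attributes.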

Eventually, I got instancing working on branch jb-objects-instanced. Sadly, performance not only didn't improve, it got worse, and I don't have a good explanation for this.

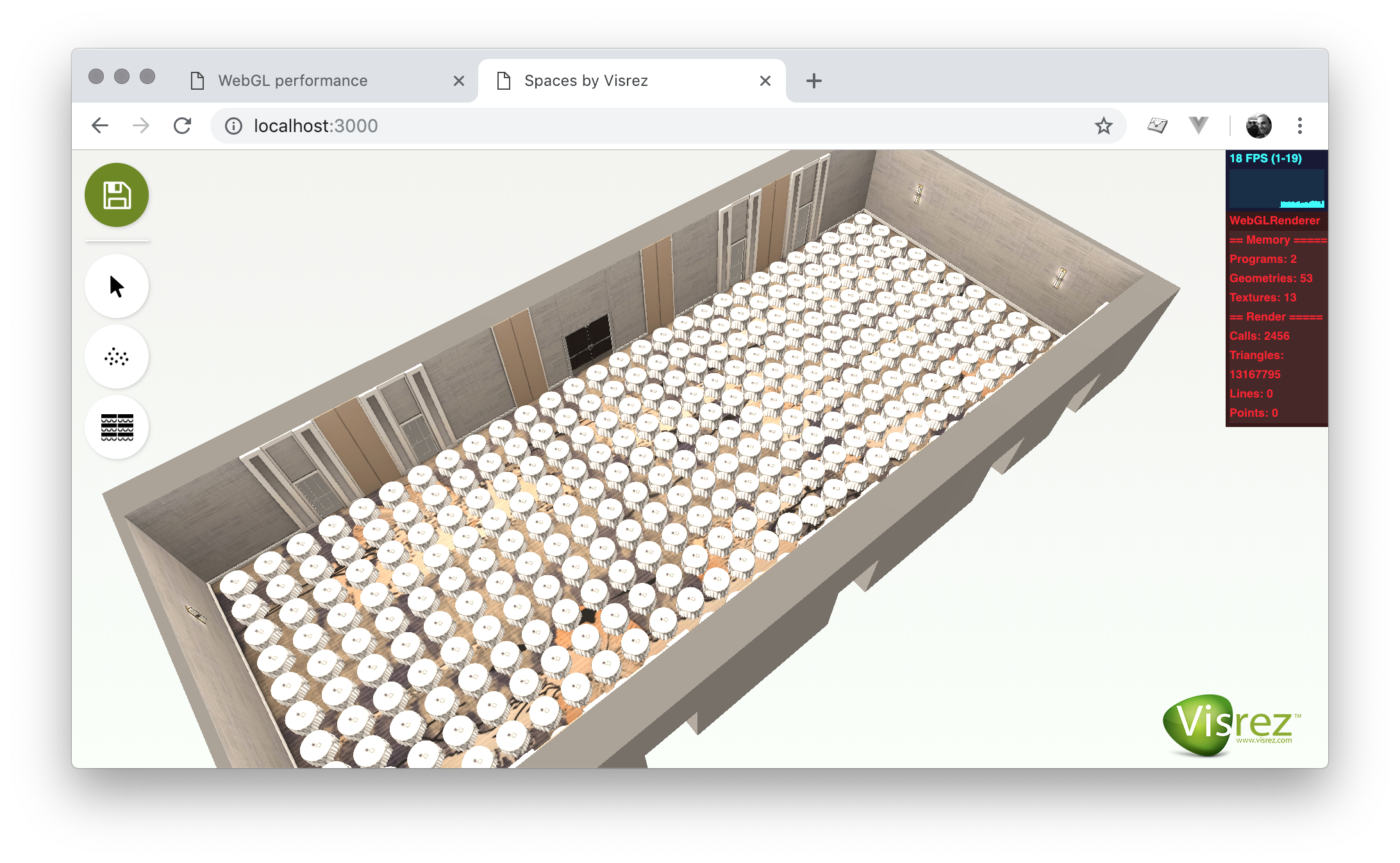

After more tinkering and experimenting, it turns out that the best performance comes from loading the raw model from glTF after preprocessing it with gltf-pipeline. Even so, performance is still not good enough to handle large rooms.

Current status

Performance is now a bit better than last week, but the problem isn't solved. We will keep running into it, especially in large event spaces such as Bonnet Creek.

There are more options to explore, such as level-of-detail (LOD) systems and offscreen canvas rendering, but none of them is guaranteed to succeed.
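As a rough sketch of what an LOD system does: each model gets several variants with decreasing triangle counts, and the renderer picks one per object based on its distance to the camera. `LodLevel`, `selectLod` and `totalTriangles` are hypothetical names, and any thresholds or triangle counts fed into them would be placeholders, not measurements from our scenes.

```typescript
// Hypothetical LOD level: which model variant to use from a given camera distance on.
interface LodLevel {
  minDistance: number;   // use this level when the camera is at least this far (scene units)
  triangleCount: number; // triangles in this variant of the model
  name: string;
}

// Picks the level with the largest minDistance that does not exceed `distance`.
// Assumes `levels` is sorted by ascending minDistance and the first level starts at 0.
function selectLod(levels: LodLevel[], distance: number): LodLevel {
  let chosen = levels[0];
  for (const level of levels) {
    if (distance >= level.minDistance) {
      chosen = level;
    } else {
      break; // later levels only have larger thresholds
    }
  }
  return chosen;
}

// Sums the triangles drawn for a set of objects, given each object's camera distance.
function totalTriangles(levels: LodLevel[], distances: number[]): number {
  return distances.reduce((sum, d) => sum + selectLod(levels, d).triangleCount, 0);
}
```

Three.js already implements this selection in `THREE.LOD`, where `addLevel(object, distance)` registers each variant; for us, the harder part would likely be producing good simplified versions of each model.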

Solving this is crucial for the development of the Spaces Editor, which is why I wanted to share the problem in case anyone can help solve it.

The code for the experiments can be found in these branches:

jb-objects-instanced, jb-objects-raw and jb-objects-singlemesh.